In the development sector, good intentions are not in short supply. Across continents and communities, resources are mobilized, projects are launched, activities are executed, and compelling stories of change are told. Reports are written, numbers are presented, and success is often implied. But beneath the narratives lies a more consequential question, one that separates motion from progress:

How do we know development is actually working?

For donors, policymakers, and practitioners, this is not philosophical. It is fiduciary, ethical, and strategic. Development must be measurable, transparent, and accountable to both the communities it serves and the institutions that fund it. Without this rigour, even the most well-intentioned interventions risk inefficiency, irrelevance, or worse, harm.

Activity vs Impact: The Critical Distinction

One of the most persistent weaknesses in development practice is the conflation of activity with impact. For instance:

- 10,000 people trained is an activity metric

- 10,000 people who increased income by 40% within 12 months is an impact metric

- 50 clinics built is an output

- 30% reduction in maternal mortality over three years is impact

In essence, activities describe what was done. Impact demonstrates what changed. This distinction is not semantic; it is structural. It determines whether development becomes a cycle of perpetual implementation or a pathway to sustainable transformation.

Activity metrics, while necessary, are leading indicators of effort. They signal scale and operational capacity but do not, on their own, validate effectiveness. Impact metrics, by contrast, are indicators of change; they capture outcomes, behavioural shifts, and system-level improvements. High-performing institutions understand that activity without outcome validation creates a dangerous illusion of success. This is why, for us, impact is not assumed; it is demonstrated, measured, and verified.
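To make the distinction concrete, here is a minimal sketch of how the training example above could be computed from participant records. The `Participant` fields, the 40% threshold parameter, and the sample figures are assumptions for illustration only; they are not program data or a prescribed method.

```python
from dataclasses import dataclass


@dataclass
class Participant:
    """One trainee's income before and 12 months after training (hypothetical fields)."""
    baseline_income: float
    followup_income: float


def activity_metric(participants: list[Participant]) -> int:
    """Activity: how many people were trained."""
    return len(participants)


def impact_metric(participants: list[Participant], threshold: float = 0.40) -> int:
    """Impact: how many trainees raised their income by at least `threshold`."""
    return sum(
        1
        for p in participants
        if p.baseline_income > 0
        and (p.followup_income - p.baseline_income) / p.baseline_income >= threshold
    )


# Illustrative data only, not program results.
cohort = [
    Participant(100.0, 150.0),  # +50%: counts toward impact
    Participant(100.0, 110.0),  # +10%: trained, but below the threshold
    Participant(200.0, 290.0),  # +45%: counts toward impact
]

print(activity_metric(cohort))  # 3
print(impact_metric(cohort))    # 2
```

The same cohort yields two different numbers: three people trained, two with a verified income gain. Note that the impact figure cannot be computed at all without a baseline measurement for each participant.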

Impact Measurement as a Moral and Strategic Imperative

If development is to function as a discipline, not merely a noble pursuit, it must be governed by evidence.

When impact is not rigorously measured:

- Interventions may unintentionally reinforce dependency rather than enable empowerment

- Resources risk being allocated to ineffective or redundant programs

- Vulnerable populations may remain underserved despite visible “activity”

- Policymakers lack credible evidence to scale solutions

- Donors are left funding assumptions instead of outcomes

At its core, impact measurement is not about data collection; it is about truth-seeking. It interrogates whether interventions produce meaningful, sustained improvements in people’s lives. It asks the real questions:

- Are people healthier, not just treated?

- Are incomes rising sustainably, not temporarily supplemented?

- Are systems strengthened, not merely supported?

This is where Monitoring and Evaluation (M&E) becomes indispensable.

- Monitoring ensures implementation fidelity, tracking whether activities occur as planned

- Evaluation assesses effectiveness, determining whether those activities translate into measurable outcomes

Together, they create a continuous feedback loop that enables learning, adaptation, and performance improvement.

Good intentions do not guarantee good outcomes. Evidence does.

Accountability as Trust Capital

In the development ecosystem, trust is currency, and accountability is how it is earned, sustained, and scaled. Accountability transforms reporting from a procedural obligation into trust capital. When systems are transparent and verifiable, they:

- Strengthen donor confidence

- Enhance institutional credibility

- Enable policy adoption and scale

- Protect communities from ineffective or extractive interventions

If measurement tells us what is happening, accountability defines who it matters to and who has the right to question it.

True accountability operates in two directions:

- Upward accountability to donors and funders, ensuring responsible stewardship of resources

- Downward accountability to communities, ensuring interventions are responsive, respectful, and aligned with real needs

Organizations that embed both do more than deliver programs; they build relationships:

- Communities become partners, not passive recipients

- Donors become collaborators, not just financiers

- Programs become adaptive systems, not rigid structures

Accountability, in this sense, transforms development from a transaction into a shared enterprise of outcomes.

Monitoring & Evaluation: From Compliance to Strategy

Too often, Monitoring & Evaluation is treated as an end-of-project requirement, a reporting checkbox. This is a strategic error. High-performing institutions recognize that M&E is not retrospective; it is predictive. When embedded from program design through implementation, it becomes a decision-making engine.

Effective M&E systems enable organizations to:

- Articulate clear theories of change

- Define measurable indicators from inception

- Establish baselines for comparison

- Track progress in real time

- Adapt interventions based on emerging evidence

- Demonstrate attributable impact with credibility
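As one possible illustration of "measurable indicators", "baselines", and "tracking progress", the sketch below models a single indicator and reports how much of the baseline-to-target distance has been covered. The `Indicator` class, its field names, and the sample values are hypothetical and not drawn from any specific framework or program.

```python
from dataclasses import dataclass


@dataclass
class Indicator:
    name: str
    baseline: float  # value measured before the intervention
    target: float    # value the program aims to reach
    current: float   # latest monitored value

    def progress(self) -> float:
        """Share of the baseline-to-target distance covered so far.

        Works for targets above or below the baseline; the result can be
        negative (moving the wrong way) or above 1.0 (target exceeded).
        """
        span = self.target - self.baseline
        if span == 0:
            raise ValueError("target must differ from baseline")
        return (self.current - self.baseline) / span


# Hypothetical indicator; the numbers are illustrative only.
attendance = Indicator(
    "skilled birth attendance rate (%)", baseline=40.0, target=60.0, current=45.0
)
print(f"{attendance.progress():.0%}")  # 25%
```

A progress figure like this shows movement against a baseline, but it does not by itself establish attribution; that typically requires a comparison group or another evaluation design.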

In this framework, M&E does not merely validate performance; it improves it while programs are still in motion. At JEF, M&E is not an appendage to programming; it is integrated into the institutional architecture. Our approach combines:

- Alignment with globally recognized frameworks (including OECD-DAC criteria and Results-Based Management principles)

- Baseline establishment and indicator clarity

- Mixed-method data collection (quantitative and qualitative)

- Institutionalized community feedback loops

- Periodic evaluations focused on sustainability, not just completion

Data, for us, is not collected for reporting; it is collected for alignment, refinement, and decision-making.

From Data to Decisions: The Real Value of Measurement

Impact measurement only becomes valuable when it informs action. Its true power lies in its ability to:

- Guide strategy: identifying which interventions to scale, adapt, or discontinue

- Improve efficiency: directing resources toward high-impact outcomes

- Strengthen advocacy: providing credible evidence to influence policy and attract investment

Credibility by Design: An Institutional Approach

Credibility in development is not built on aspiration; it is built on systems. JEF’s Monitoring & Evaluation framework is structured around:

- Clear development hypotheses and baseline identification

- Defined output, outcome, and impact indicators

- Real-time monitoring systems

- Independent evaluation mechanisms

- Transparent stakeholder reporting

- Continuous learning and adaptation loops

This architecture ensures that impact is not inferred; it is traceable, verifiable, and scalable.

Evidence from the Field: Moving Beyond Activity Metrics (Q4 2025 – Q1 2026)

Across our interventions, we intentionally move beyond reporting activities to demonstrating measurable outcomes. Here’s a snapshot.

1. Amaokwe Item, Abia State: Efficient Service Delivery and Measurable Health Impact

Activity Metrics

- 3,463 patients received medical care

- 6,556 medical interventions were administered

Impact Metrics

- Comprehensive and holistic healthcare delivery

- Improved health outcomes through multi-layered interventions

- Reduced barriers to access

- Strengthened community health confidence

- Data-driven health insights

Evaluation Insight: Impact extended beyond superficial treatment to depth of care and effectiveness of intervention design.

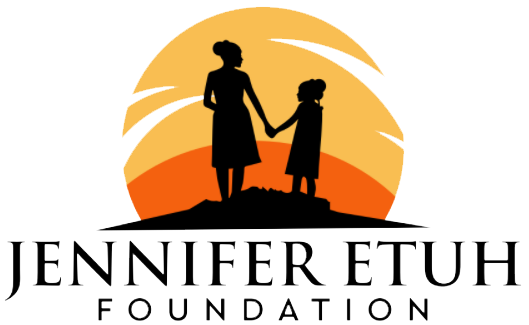

2. Tula, Gombe State: Healthcare Access with Data Intelligence

Activity Metrics

- 3,079 patients treated in a free medical outreach

- 8,428 medical interventions delivered

Impact Metrics

Beyond service counts, structured data collection enabled:

- Disease pattern tracking

- Identification of high-burden conditions

- Referral pathway optimization

- Transparent stakeholder reporting

Evaluation Insight: Activity data was converted into epidemiological intelligence, strengthening both immediate response and future planning.

3. Mallagum-Kagoro, Kaduna State: Economic Empowerment with Measurable Returns

Activity Metrics

- Over 2,000 women trained in skills and vertical sack farming

Impact Metrics

- Over 80% of trainees recorded a 97% increase in plant yield

- Documented increases in household income

Evaluation Insight: Training was linked to productivity and income outcomes, demonstrating a clear pathway from intervention to economic resilience.

4. Kagoro, Kaduna State: Health Financing as Systemic Impact

Activity Metrics

- 1,000 widows enrolled in the Kaduna State Contributory Health Care Scheme, administered by the Kaduna State Contributory Health Management Authority (KADCHMA)

- 3,813 patients treated in a free medical outreach

- 8,511 interventions delivered

Impact Metrics

- Sustained access to primary and secondary healthcare

- Reduced out-of-pocket expenditure risk

- Improved household health security

Evaluation Insight: This intervention moved beyond episodic care to system-level protection, a stronger indicator of long-term impact.

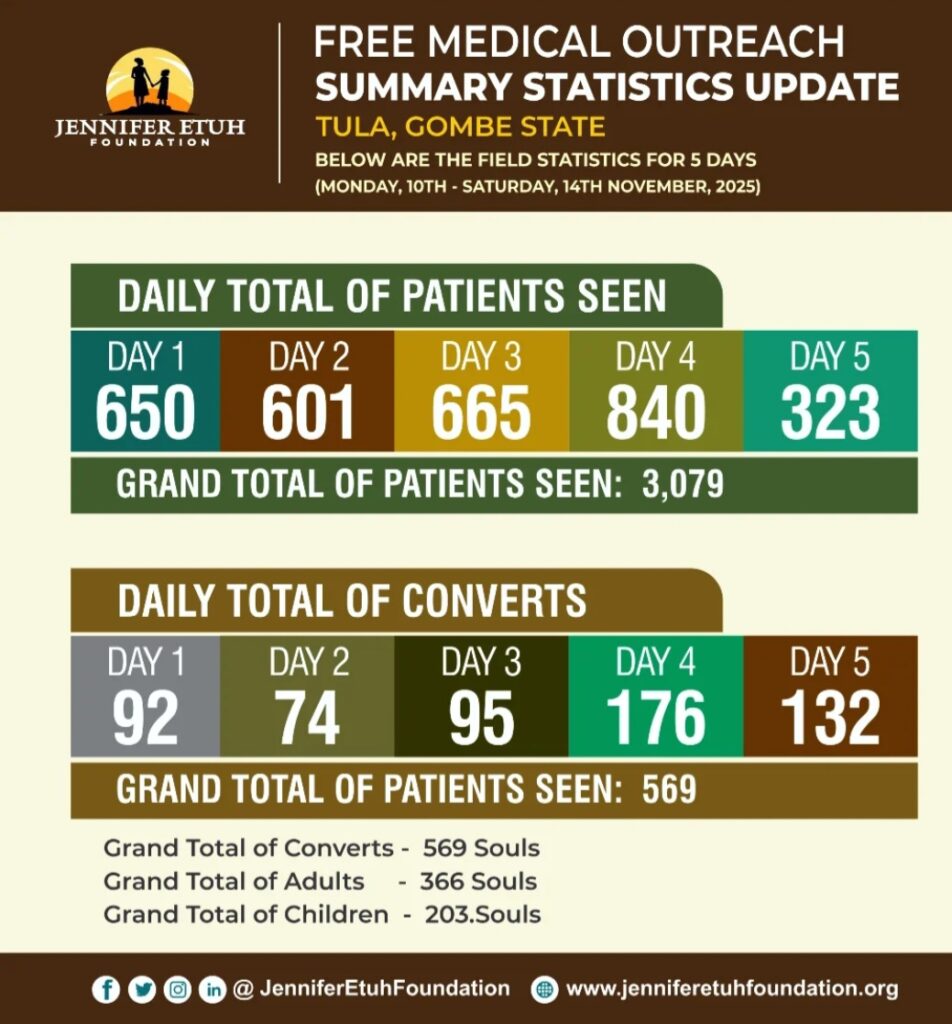

5. Odu-Ogboyaga, Kogi State: Integrated Community Support

Activity Metrics

- 1,200 widows supported through a widows’ outreach

- 3,805 patients treated at a free medical outreach

- 10,097 interventions delivered

Impact Metrics

- Improved household stability

- Enhanced community productivity

Evaluation Insight: Multi-dimensional interventions produced compounded social outcomes, demonstrating the value of integrated programming.

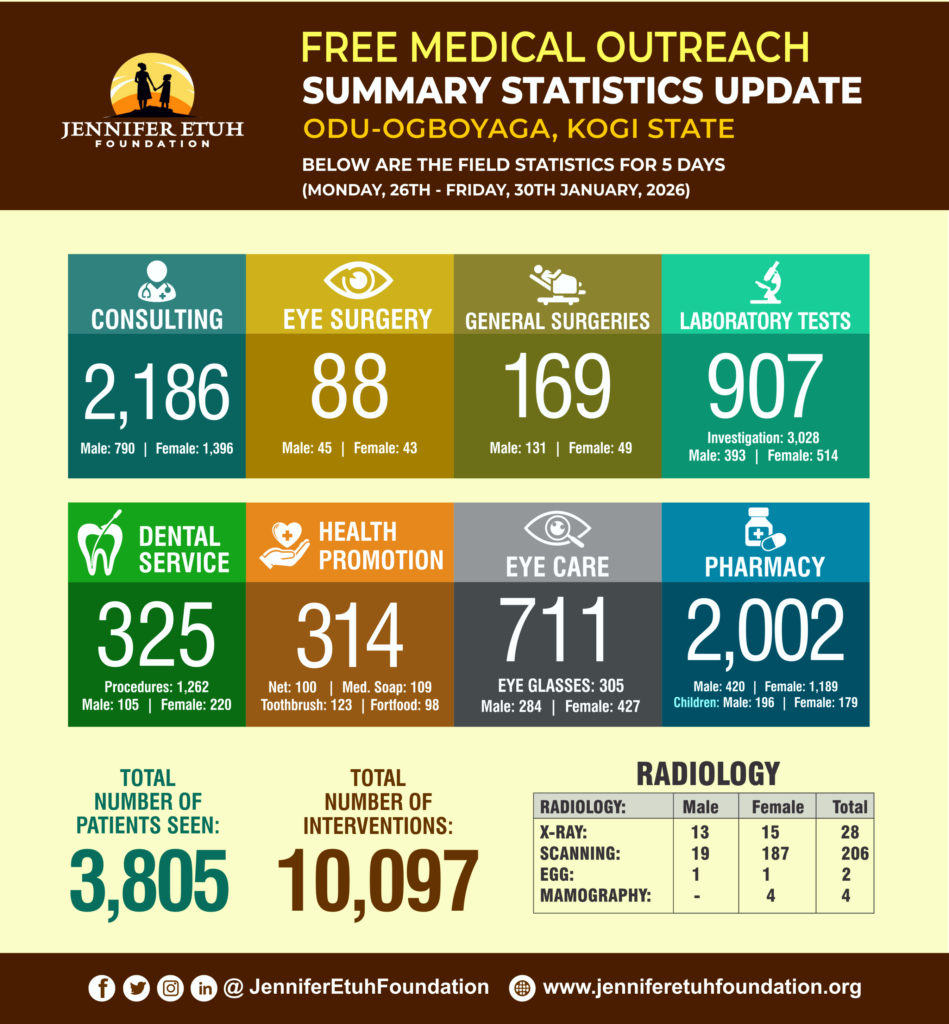

6. Umunoha, Imo State: Inclusive Healthcare Delivery

Activity Metrics

- 3,127 patients treated at a free medical outreach

- 7,548 interventions delivered

Impact Metrics

- Increased inclusion of persons with disabilities

- Reduced malaria risk through preventive education and tools

Evaluation Insight: Impact extended beyond treatment to inclusion and prevention, key markers of sustainable health outcomes.

7. Specialized Surgical Interventions: Restoring Dignity

Activity Metrics

- 89 patients treated via a specialized surgical outreach

Impact Metrics

- Resolution of life-threatening conditions

- Restoration of dignity and functionality

Evaluation Insight: Targeted, high-value interventions demonstrated deep impact at the individual level, complementing broader population reach.
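For readers who want to compare the outreach figures reported above, the following sketch derives one simple ratio, interventions per patient, for the five medical outreaches that report both counts. The ratio is an assumption introduced here as a rough proxy for intensity of care; it remains an activity-level figure and is not an impact measure. The surgical outreach is omitted because no intervention count is reported for it.

```python
# (patients, interventions) as reported in the outreach snapshots above.
outreaches = {
    "Amaokwe Item, Abia": (3_463, 6_556),
    "Tula, Gombe": (3_079, 8_428),
    "Kagoro, Kaduna": (3_813, 8_511),
    "Odu-Ogboyaga, Kogi": (3_805, 10_097),
    "Umunoha, Imo": (3_127, 7_548),
}

# Per-site ratio of interventions delivered to patients seen.
for site, (patients, interventions) in outreaches.items():
    print(f"{site}: {interventions / patients:.2f} interventions per patient")

# Pooled totals across the five outreaches.
total_patients = sum(p for p, _ in outreaches.values())
total_interventions = sum(i for _, i in outreaches.values())
print(f"Total patients: {total_patients}")            # 17287
print(f"Total interventions: {total_interventions}")  # 41140
print(f"Overall: {total_interventions / total_patients:.2f} per patient")
```

A higher ratio indicates that patients received more services per visit, not that their health improved; establishing the latter would require follow-up outcome data.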

A Necessary Sectoral Shift

Looking into the future, one thing is evident: development will not be defined by the number of projects implemented, but by the quality, depth, and measurability of change achieved. If development cannot clearly answer:

- What changed?

- By how much?

- For whom?

- And for how long?

then it must evolve.

At JEF, we are deliberate about building systems where impact is measurable, accountability is embedded, and transparency is non-negotiable. Because when impact is measured, development becomes scalable. When accountability is embedded, trust becomes durable. And when evidence leads, transformation becomes undeniable.

That is how we know development is working.